Why choose our Data Analyst apprenticeship?

QA's Data Analyst Level 4 apprenticeship develops the skills needed to collect, organise and study data to provide valuable business insight.

The principles of data analytics are being applied across almost every industry. Using historical data, analysts make important insight-based business decisions and drive customer value across every team and function, including operations, finance, sales and marketing.

At QA we have deep-rooted expertise in Data, Analytics and AI. Our solutions transform the way that individuals use data and enable organisations to make more data-driven business decisions.

QA's Data Analyst Level 4 apprenticeship programme enables your organisation to:

- Build the skills and capabilities you need throughout your organisation to analyse, interrogate and present technical data, providing informed and valuable business insights to a range of stakeholders.

- Upskill or reskill your existing workforce with data skills and create analysts for the modern-day workplace.

- Recruit and harness a new talent pathway: QA can help you cost-effectively recruit diverse, ambitious talent into your business and build a pipeline of data-literate talent.

Delivered by industry experts with real-world experience, the programme's content has been designed around real-life skills and includes the additional PL-300 Microsoft Power BI Data Analyst certification (see below). The technical content is aligned to the needs of employers and the market.

Upon successful completion, learners will be awarded the Data Analyst Level 4 apprenticeship.

Tools and technologies learned

Learners will learn to use visualisation tools such as Power BI and Tableau, SQL Server, SSIS, the Python and R programming languages, and cloud technologies such as Azure, AWS and GCP.

Why choose QA

30,000

Tech careers started or enhanced through apprenticeships

93%

Success rate for learners on the Data Analyst Level 4 programme

5 minutes

Response time to learner queries

Under 24 hours

Feedback provided on learner submissions

100,000

Applicants to our apprenticeship programmes every year

Highest overall pass rate

Among UK tech training providers

Gold Award

Best Use of Blended Learning at the Learning Tech Awards 2020

Committed to social mobility, diversity and inclusion

Key partners include Barnardo's, Stemettes and Code First Girls

91%

of QA apprentices go straight into full-time jobs

2.1 million

jobs in the digital economy

£42,578

average skilled digital salary

The Data Analyst Level 4 programme overview

Learners benefit from a flexible and blended learning journey, helping them apply technical data solutions to a range of real-life scenarios – gaining valuable workplace skills faster.

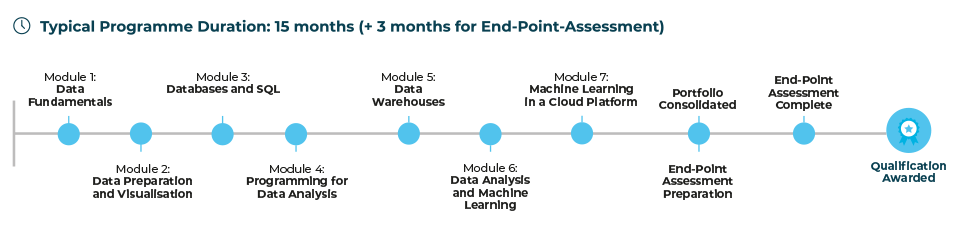

The typical duration of the Data Analyst Level 4 programme is 15 months, plus a 3-month end-point assessment. The modules, in order, include Data Fundamentals, Data Preparation and Visualisation, Databases and SQL, Programming for Data Analysis, Data Warehouses, Data Analysis and Machine Learning, and Machine Learning in a Cloud Platform.

This apprenticeship programme also includes the option to achieve Microsoft Power BI Data Analyst certification, helping apprentices to understand various methods and best practices for modelling, visualising and analysing data with Power BI.

Entry requirements

Standard entry:

- Level 3 qualification (apprenticeship, A-levels, BTEC, etc.)

- OR equivalent work experience (typically two years in a relevant role)

Plus:

- 5 GCSEs, including English and Maths at Grade 4 (C) or above

- Experience of using Excel (in particular pivot tables and XLOOKUP) and Microsoft products (or similar)

Please see the programme guide for full entry requirements.

Possible job roles for a Data Analyst apprentice

This programme is suitable for multiple roles across finance, marketing, operations, people, procurement and sales. The Data Analyst's role involves operating within the company's data architecture to guarantee that data is managed compliantly, securely, and in accordance with company policies and regulations.

Typical job roles suitable for this apprenticeship are: Data Analyst, Data Insight Analyst, Data Visualisation Engineer, Business Intelligence Analyst.

With further training, associated fields include Data Engineering, Data Science, DevOps and Software Development.

Employer funding

The Data Analyst Level 4 programme can be funded using the apprenticeship levy, so you pay no more than 5% of the programme cost.

What our learners say

"The learning material I’ve covered has been really in-depth and easy to navigate."

Useful links

Looking to become an apprentice?

Your first step is to apply for one of our apprentice jobs.

Start by choosing the relevant filter to see our current vacancies.

Partnering with you to power potential

We can work together to help recruit tech and digital apprentices or help reskill your existing workforce and provide your business with the in-demand tech skills you need.

Get in touch with us. Our team will respond within 48 hours.

Frontloaded option available

This apprenticeship is also available as a frontloaded apprenticeship, which covers over 60% of the apprenticeship content within the first 10 weeks of the programme.